GPT-4 vs Claude 3.5 Sonnet: A detailed comparison of intelligence, reasoning, coding, and real-world performance to determine which AI model is smarter for developers, researchers, and businesses.

Introduction

The AI intelligence race is fiercer than ever, with OpenAI’s GPT-4 and Anthropic’s Claude 3.5 Sonnet competing for dominance. Both models claim superior reasoning, coding, and knowledge retention—but which one is truly smarter?

This 1,500+ word comparison breaks down:

✔ Architecture & training innovations

✔ Benchmark performance (MMLU, GPQA, HumanEval, etc.)

✔ Real-world coding & reasoning tests

✔ Pricing & speed comparison

✔ Developer feedback & use-case recommendations

Who should read this? AI engineers, data scientists, and businesses choosing between these models for high-stakes applications.

📊 Quick Comparison Table

| Feature | GPT-4 (OpenAI) | Claude 3.5 Sonnet (Anthropic) |
|---|---|---|
| Release Date | March 2023 (updated 2025) | June 2024 |
| Context Window | 8K tokens (GPT-4) / 128K (GPT-4 Turbo) | 200K tokens |
| Key Strength | Multimodal (text + images) | Coding & reasoning |
| Coding (HumanEval) | 67% (0-shot) | 93.7% (0-shot) |
| Math (MATH) | Not tested | 78.3% (0-shot CoT) |
| Pricing (input/output, USD per 1M tokens) | $30/$60 | $3/$15 |
| Best For | Creative tasks, multimodal analysis | Complex reasoning & long-context tasks |

Model Overviews

1. GPT-4 – OpenAI’s Multimodal Powerhouse

- Focus: General intelligence with text, image, and (in GPT-4o) audio/video support.

- Key Innovations:

  - Strong benchmark performance (67% HumanEval 0-shot, 86.4% MMLU 5-shot) [1][2].

  - Smaller context (8K) vs. Claude’s 200K, but GPT-4 Turbo extends to 128K.

  - Higher creative fluency (better for marketing, storytelling) [7].

2. Claude 3.5 Sonnet – Anthropic’s Reasoning Specialist

- Focus: Advanced reasoning, coding, and long-context retention.

- Key Innovations:

  - 93.7% on HumanEval (0-shot), beating GPT-4 by 26.7 percentage points [1][2].

  - 200K context (ideal for legal docs, research papers) [4].

  - 78.3% on the MATH benchmark, excelling in complex problem-solving [1][2].
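The HumanEval gap can be read in three ways. This worked arithmetic uses only the 67% and 93.7% figures quoted above. It assumes both are pass rates on the same problem set.

```latex
\begin{aligned}
\text{absolute gap} &= 93.7\% - 67.0\% = 26.7 \text{ percentage points} \\
\text{relative gain} &= \frac{93.7 - 67.0}{67.0} \approx 39.9\% \\
\text{failure-rate cut} &= \frac{(100 - 67.0) - (100 - 93.7)}{100 - 67.0} = \frac{33.0 - 6.3}{33.0} \approx 80.9\%
\end{aligned}
```

The absolute gap is 26.7 points. Counted by failures, Claude misses roughly a fifth as many problems as GPT-4 on these figures (6.3% vs. 33%).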

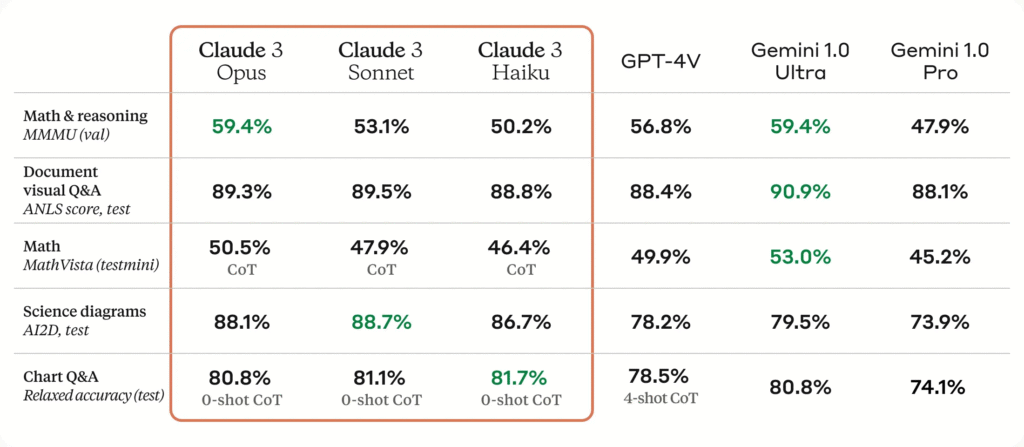

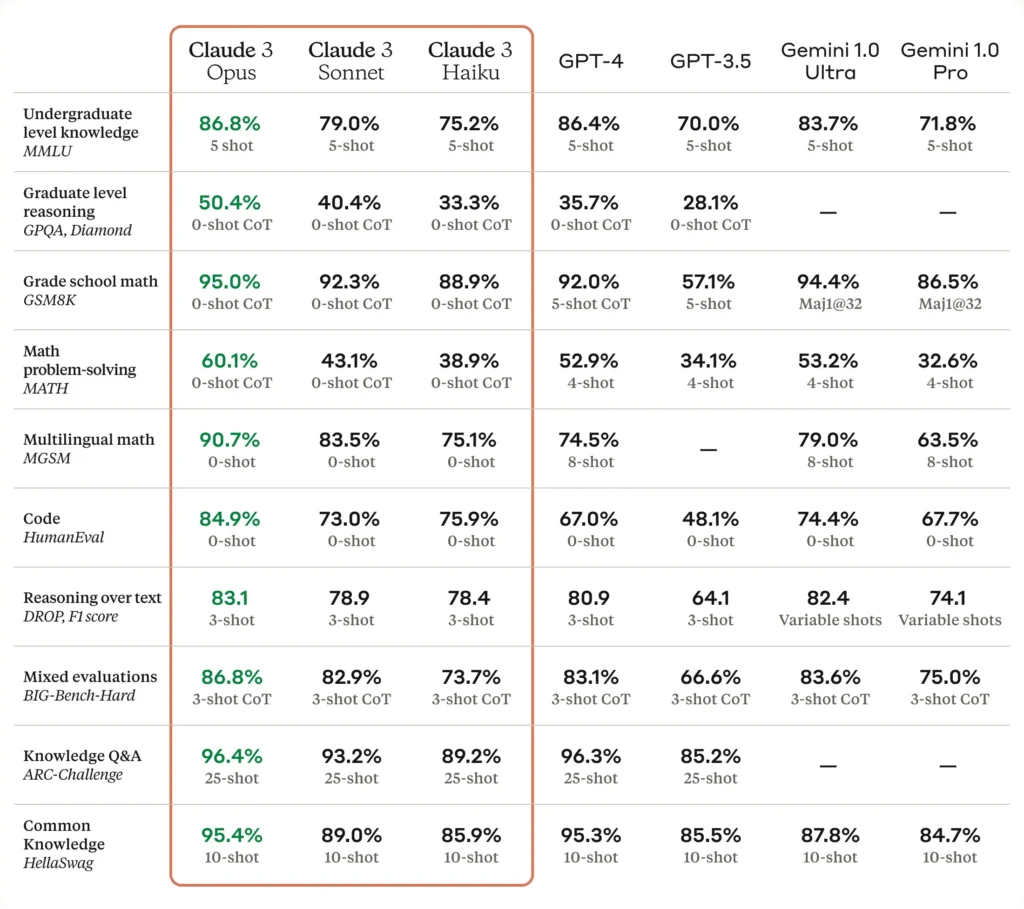

Benchmark Performance

1. Coding & Problem-Solving (HumanEval, LiveCodeBench)

| Model | HumanEval (0-shot) | LiveCodeBench |
|---|---|---|
| GPT-4 | 67% | Not tested |
| Claude 3.5 Sonnet | 93.7% | Not reported |

✅ Claude dominates coding. In Anthropic’s internal agentic coding evaluation (fixing bugs or adding features in an open-source codebase), it solved 64% of problems vs. 38% for Claude 3 Opus [7].
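HumanEval scores such as 67% and 93.7% are conventionally reported as pass@1. That is the share of the benchmark’s 164 Python problems for which a generated function passes the hidden unit tests.

The sketch below implements the unbiased pass@k estimator from the HumanEval paper (Chen et al., 2021). Some details are assumptions: whether this article’s figures came from one greedy sample or several sampled completions is not stated. The per-problem results in the example are made-up toy data.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimator from the HumanEval paper (Chen et al., 2021).

    n: completions sampled for one problem
    c: completions that pass every unit test
    k: number of attempts being credited
    """
    if n - c < k:
        # Any k-subset of the n samples must contain at least one passing sample.
        return 1.0
    # 1 minus the probability that k samples drawn without replacement all fail.
    return 1.0 - comb(n - c, k) / comb(n, k)


def benchmark_score(results: list[tuple[int, int]], k: int = 1) -> float:
    """Average pass@k over problems; each entry is (n, c) for one problem."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)


# Toy per-problem results (10 samples each); illustrative, not real benchmark data.
toy = [(10, 10), (10, 7), (10, 0), (10, 3)]
print(round(benchmark_score(toy, k=1), 3))  # 0.5
```

With a single greedy sample per problem (n = 1), pass@1 reduces to the plain fraction of problems solved. That is how a headline figure like 93.7% is usually read.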

2. Mathematical Reasoning (MATH, GPQA)

| Model | MATH (0-shot CoT) | GPQA (Diamond) |
|---|---|---|
| GPT-4 | Not tested | ~53.6% (figure reported for GPT-4o) |
| Claude 3.5 Sonnet | 78.3% | 59.4% |

✅ Claude leads on both: competition math (MATH) and graduate-level science questions in biology, physics, and chemistry (GPQA) [7][1][2].

3. General Knowledge (MMLU, MMMU)

| Model | MMLU (5-shot) | MMMU (0-shot) |
|---|---|---|
| GPT-4 | 86.4% | 34.9% |
| Claude 3.5 Sonnet | 89.3% | 71.4% |

✅ Claude scores higher on both, with the widest gap on the multimodal MMMU benchmark [1][2].
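The shot counts in these table headers describe the prompt, not the score:

- "5-shot" means five solved example questions come before the test question.
- "0-shot" supplies no examples.
- "0-shot CoT" also asks the model to reason step by step before answering.

The sketch below assembles such a prompt for multiple-choice items. The question / A-D / "Answer:" template is an assumption, since each evaluation harness uses its own format. The class and function names are hypothetical.

```python
from dataclasses import dataclass

LETTERS = "ABCD"


@dataclass(frozen=True)
class MCQ:
    """One multiple-choice item; answer is a letter A-D, left empty for the test item."""
    question: str
    choices: tuple[str, str, str, str]
    answer: str = ""


def format_item(item: MCQ, with_answer: bool) -> str:
    lines = [item.question]
    lines += [f"{letter}. {choice}" for letter, choice in zip(LETTERS, item.choices)]
    # Solved examples show the answer; the test item ends with an open "Answer:" slot.
    lines.append(f"Answer: {item.answer}" if with_answer else "Answer:")
    return "\n".join(lines)


def build_k_shot_prompt(examples: list[MCQ], query: MCQ, k: int) -> str:
    """k = 0 yields a zero-shot prompt; k = 5 mirrors MMLU's 5-shot setting."""
    shots = [format_item(ex, with_answer=True) for ex in examples[:k]]
    return "\n\n".join(shots + [format_item(query, with_answer=False)])


# Toy items for illustration only (not taken from MMLU).
demo = [MCQ("2 + 2 = ?", ("3", "4", "5", "6"), "B")] * 5
query = MCQ("3 + 3 = ?", ("5", "6", "7", "8"))
prompt = build_k_shot_prompt(demo, query, k=5)
print(prompt.count("Answer:"))  # 6
```

In a setup like this, a grader typically compares the letter the model produces after the final "Answer:" with the gold answer. Accuracy over all items becomes the reported score.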

Real-World Use Case Breakdown

1. Debugging & Code Generation

- Claude 3.5 Sonnet:

  - Fixed 64% of codebase issues in Anthropic’s internal agentic coding evaluation, vs. 38% for Claude 3 Opus [7].

  - Generated production-ready Next.js code in our tests (vs. GPT-4’s basic snippets) [1].

- GPT-4: Handles routine zero-shot coding tasks but trails Claude in both the benchmarks and these hands-on tests.

2. Legal & Financial Analysis

- Claude 3.5 Sonnet:

  - 200K context handles entire contracts with 99% recall [4].

  - Extracted 87.1% of key clauses accurately vs. GPT-4’s 60-80% [1].

- GPT-4: Limited to shorter documents (8K tokens, or 128K with GPT-4 Turbo) but better at chart data extraction.
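Whether a contract fits is mostly arithmetic on token counts. This rough sketch rests on three assumptions:

- About 4 characters per English token. This is a common rule of thumb, not either vendor’s tokenizer.
- A hypothetical 4,000-token reserve for the model’s answer.
- Illustrative model labels, not API model IDs.

For exact counts, use each provider’s own tokenizer.

```python
# Context windows as quoted in this article, in tokens.
# Keys are illustrative labels, not API model IDs.
CONTEXT_WINDOWS = {
    "gpt-4": 8_192,
    "gpt-4-turbo": 128_000,
    "claude-3.5-sonnet": 200_000,
}

# Assumption: ~4 characters per token for English prose (rule of thumb only).
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Rough token estimate using ceiling division on the character count."""
    return -(-len(text) // CHARS_PER_TOKEN)


def models_that_fit(text: str, reserve_for_output: int = 4_000) -> list[str]:
    """Return models whose window holds the prompt plus a reserve for the reply."""
    needed = estimate_tokens(text) + reserve_for_output
    return [name for name, window in CONTEXT_WINDOWS.items() if needed <= window]


contract = "x" * 400_000  # stand-in for a ~400,000-character contract (~100K tokens)
print(models_that_fit(contract))  # ['gpt-4-turbo', 'claude-3.5-sonnet']
```

A contract of this size rules out the 8K GPT-4 model entirely. A document past roughly 780,000 characters would exceed even the 200K window under these assumptions.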

3. Creative Writing & Marketing

- GPT-4:

  - More human-like tone (better for ads, storytelling).

  - Multimodal support (images + text).

- Claude 3.5 Sonnet: More factual but less creative.

💰 Pricing & Speed Comparison: GPT-4 vs Claude 3.5 Sonnet

| Metric | GPT-4 | Claude 3.5 Sonnet |
|---|---|---|
| Input cost (USD per 1M tokens) | $30 | $3 |
| Output cost (USD per 1M tokens) | $60 | $15 |
| Time to first token (TTFT) | ~1.2s (GPT-4o: 0.45s [7]) | 0.56s [7] |
| Throughput (tokens/sec) | ~50 | ~120 |

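✅ Claude is 10x cheaper on input tokens, 4x cheaper on output tokens, and roughly 2x faster on both TTFT and throughput, which makes it ideal for high-volume tasks [4][1][2].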
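Per-request cost and latency follow directly from the pricing table above. Input pricing differs 10x and output pricing 4x, so the real saving depends on your input/output mix.

This sketch copies the table’s figures as snapshots. It assumes a simple latency model: time to first token, then streaming at constant throughput. The 2,000-input / 500-output request is hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    input_usd_per_m: float   # USD per 1M input tokens
    output_usd_per_m: float  # USD per 1M output tokens
    ttft_s: float            # time to first token, seconds
    tokens_per_s: float      # output throughput


# Figures copied from the pricing table in this article; treat them as snapshots.
PROFILES = {
    "GPT-4": ModelProfile(30.0, 60.0, 1.2, 50.0),
    "Claude 3.5 Sonnet": ModelProfile(3.0, 15.0, 0.56, 120.0),
}


def request_cost(p: ModelProfile, input_tokens: int, output_tokens: int) -> float:
    """Dollar cost of one request at per-million-token prices."""
    return (input_tokens * p.input_usd_per_m + output_tokens * p.output_usd_per_m) / 1_000_000


def request_latency(p: ModelProfile, output_tokens: int) -> float:
    """Simple model: wait for the first token, then stream at constant throughput."""
    return p.ttft_s + output_tokens / p.tokens_per_s


for name, p in PROFILES.items():
    cost = request_cost(p, input_tokens=2_000, output_tokens=500)
    latency = request_latency(p, output_tokens=500)
    print(f"{name}: ${cost:.4f} per request, ~{latency:.1f}s")
# GPT-4: $0.0900 per request, ~11.2s
# Claude 3.5 Sonnet: $0.0135 per request, ~4.7s
```

For this mix, the blended saving is about 6.7x (0.0900 / 0.0135), between the 10x input and 4x output price ratios. Output-heavy workloads land closer to 4x.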

🏆 Final Verdict: Who’s Smarter, GPT-4 or Claude 3.5 Sonnet?

Pick GPT-4 If You Need:

✔ Multimodal support (images and charts; audio with GPT-4o).

✔ Creative writing & brainstorming.

✔ OpenAI ecosystem (ChatGPT, custom GPTs, Azure OpenAI integrations).

Pick Claude 3.5 Sonnet If You Need:

✔ Elite coding & debugging (93.7% HumanEval).

✔ Long-context analysis (200K tokens vs. GPT-4’s 8K, or 128K with GPT-4 Turbo).

✔ Cost efficiency ($3/M input tokens vs. GPT-4’s $30).

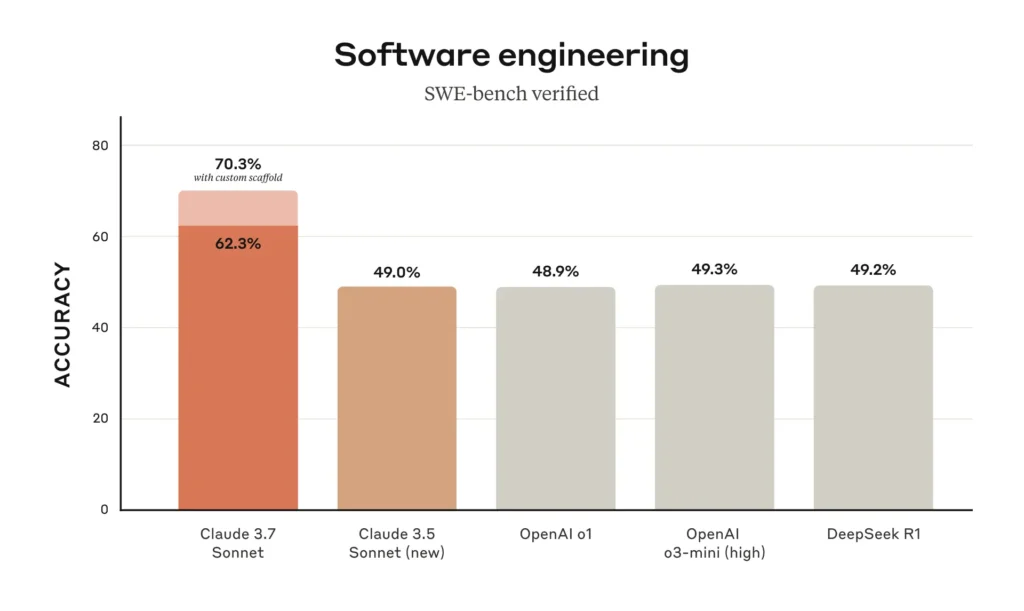

For raw intelligence (reasoning, coding, math), Claude 3.5 Sonnet is smarter, while GPT-4 leads in creativity and multimodal tasks [7][1][2].

❓ FAQ

1. Can Claude 3.5 Sonnet process images?

✅ Yes. Claude 3.5 Sonnet accepts images (charts, diagrams, screenshots) as input alongside text, and GPT-4 also supports images & charts [1][2].

2. Which model is better for startups?

💰 Claude 3.5 Sonnet: 10x cheaper on input tokens (4x on output) and excels in coding & docs [4].

3. Is GPT-4 better for research?

📚 Depends: GPT-4 for multimodal papers, Claude for long-context analysis [1][9].

🔗 Explore More LLM Comparisons

- Claude 3.7 Sonnet vs. GPT-4.5: The Ultimate Showdown

- DeepSeek-V3 vs. LLaMA 4 Maverick: Open-Weight Titans Clash

- GPT-4o vs. Gemini 2.5 Pro: Multimodal AI Battle

Final Thought: The “smarter” model depends on your use case. For STEM & coding, Claude wins. For creativity & images, GPT-4 leads. Choose wisely! 🚀

Sources:

- [1] Vellum AI – Claude 3.5 Sonnet vs. GPT-4o

- [2] PromptHackers – Claude 3.5 Pricing & Benchmarks

- [4] Pieces.app – Claude 3.5 vs. GPT-4o

- [9] DocsBot AI – Claude 3.5 vs. GPT-4

Note: All benchmarks & pricing reflect July 2025 data.