Intro paragraph (quick answer / featured snippet): To build a high‑performance AI agent benchmarking suite, create a repeatable, automated evaluation pipeline that (1) defines representative tasks and success metrics, (2) runs parallelized, multi‑agent experiments, (3) collects standardized telemetry (accuracy, latency, cost, token usage), and (4) integrates with CI for regression detection.

This post gives a step‑by‑step outline to design, build, measure, and operate an AI agent benchmarking capability optimized for AI agent benchmarking, model evaluation suite requirements, skill testing frameworks, and autonomous agent QA. It focuses on practical architecture, measurable metrics, and operational workflows so product managers, ML engineers, QA leads, skill authors, and dev‑tool builders can reliably measure agent-level behavior, catch regressions, and make cost/efficiency tradeoffs explicit.

For concrete vendor examples and early adopter guidance, see Anthropic’s writeup on skill‑creator evals (parallel multi‑agent benchmark mode) and the companion GitHub skills repo for runnable examples and benchmark.py samples [1][2].

Background

Why AI agent benchmarking matters

AI agent benchmarking matters because agents are composed software systems—not just models. A model evaluation suite tests a model’s responses to prompts; AI agent benchmarking evaluates agent-level workflows, orchestration, and multi‑step behavior in production‑like environments. Good benchmarking:

- Distinguishes agent capabilities across model versions and skill sets.

- Detects regressions early (e.g., changes in model behavior that break a skill trigger).

- Guides skill lifecycle decisions: when a capability‑uplift skill is redundant because the base model improved, or when an encoded‑preference skill still needs enforcement.

- Validates end‑to‑end autonomous agent QA: retries, tool usage, and failure modes.

Think of an AI agent benchmark as the equivalent of an integration and end‑to‑end test suite for microservices: it verifies behaviors that unit tests (model evals) cannot capture. Anthropic’s upgraded skill‑creator added runnable evals and benchmark mode that enable CI‑style regression testing for Agent Skills—this mirrors modern software testing and reduces manual validation time [1].

Key terms and components (glossary)

- Benchmarking harness / test runner: the process controller that schedules and executes test cases.

- Evaluation dataset: canonical tasks, edge cases, adversarial scenarios, and gold labels.

- Orchestrator / controller: parallelism, retries, distributed agent runners.

- Telemetry & metrics: accuracy, pass@k, BLEU/ROUGE where applicable, latency, tokens, cost.

- Test oracle and scoring functions: deterministic checks or fuzzy semantic scorers for open responses.

- Skill metadata and registration: skill endpoints, triggers, permissioning, and expected outputs.

For practical examples and community tooling, review the Anthropic skills repository and documentation which demonstrate how evals are structured and run in benchmark mode [2][1].

Trend

Current trends shaping AI agent benchmarking

A few converging trends are reshaping how teams approach AI agent benchmarking:

- Parallel multi‑agent execution and explicit benchmark modes to reduce wall‑clock time for large datasets.

- Movement from ad‑hoc manual checks to CI‑integrated, repeatable evals—smoke tests on PRs and full benchmarks on release candidates.

- Telemetry expanding beyond accuracy to include token usage, API cost, throughput, memory, and failure modes.

- Growth of opinionated skill testing frameworks and community repos that provide templates, scoring plugins, and vendor adapters.

Analogy: if model evaluation is a unit test framework (fast, deterministic), agent benchmarking is a distributed integration testbed—more complex, higher latency, and requiring orchestration, but essential for release confidence.

Evidence & impact

Early adopters reporting parallel skill evals have observed a 30–40% reduction in testing time and a material drop in manual validation work thanks to vendor‑supplied benchmark modes and example scripts [1][2]. Benchmarking is also broadening to include deployment‑oriented metrics: API throttling, concurrent request throughput, memory footprint, and failure distributions.

Why this matters for teams: faster release cycles with safer model/skill updates, clearer criteria for retiring capability‑uplift skills, and the ability to gate model upgrades with regression detectors in CI pipelines.

Insight

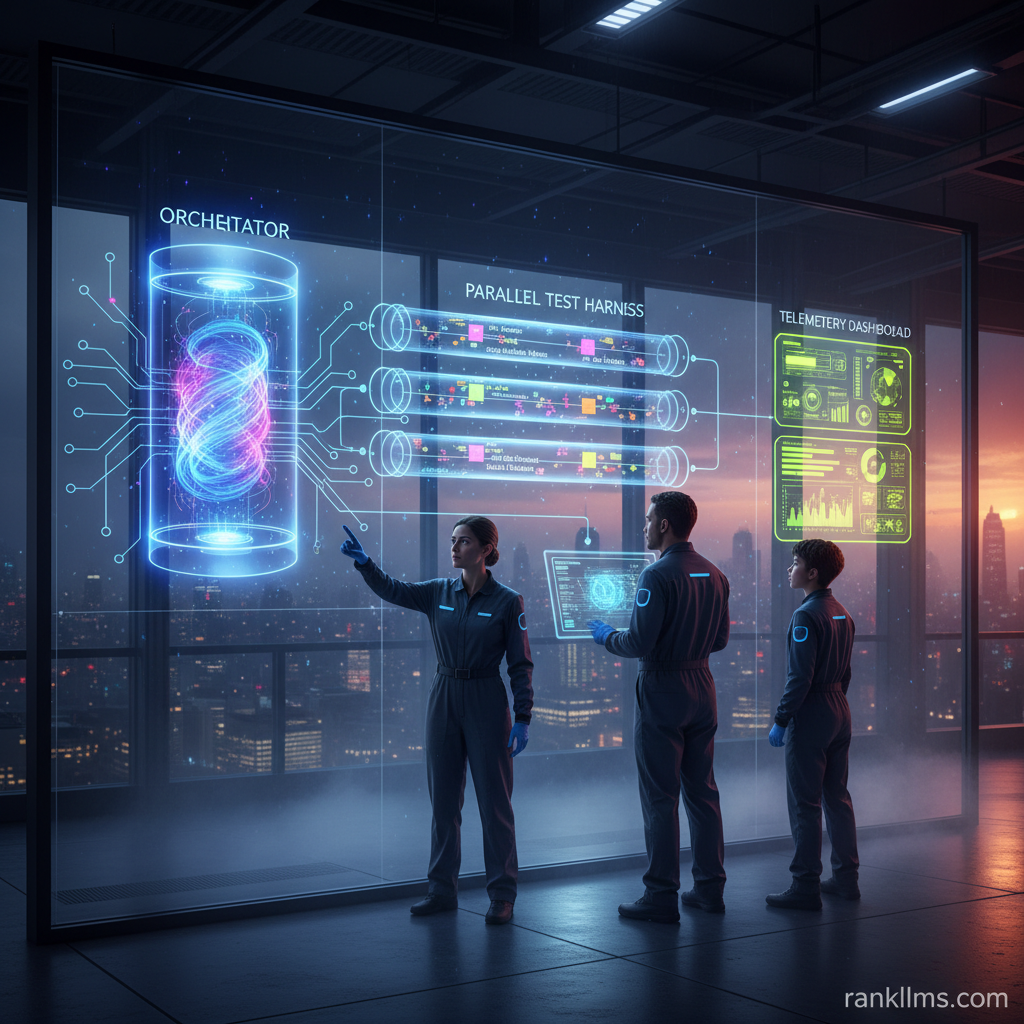

High‑level architecture: components of a high‑performance suite

A practical high‑performance AI agent benchmarking architecture contains:

- Ingest: scenario & dataset manager (versioning, synthetic generator, PII sanitization).

- Orchestrator: scheduler supporting parallel runs, sharding, retries, and distributed runners.

- Environment & simulators: sandboxed contexts for autonomous agent QA that emulate APIs, web actions, and side effects.

- Evaluation engine: deterministic and fuzzy scoring functions, oracles, and a human‑in‑the‑loop adjudication pipeline.

- Telemetry collector: structured logs for latency, tokens, memory, costs, and success/failure signals.

- Dashboard & reporting: leaderboards, regression alerts, and re‑baseline tools.

Minimal example evaluation matrix (what to measure)

- Correctness: pass/fail, accuracy, F1, task success rate.

- Robustness: adversarial case performance, stability under noise.

- Efficiency: average latency, concurrency throughput.

- Cost: tokens per task, API spend per scenario.

- Maintainability: flaky test rate, time‑to‑detect regressions.

Step‑by‑step implementation (featured snippet friendly)

1. Define goals and success metrics (e.g., 95% task success, <2s median latency).

2. Curate and version benchmark datasets: canonical tasks, edge cases, adversarial inputs, gold answers.

3. Build a test harness/orchestrator to run tasks in parallel across agents and model versions.

4. Implement standardized scoring functions and oracles (automated + human fallback).

5. Collect telemetry: latency, tokens, memory, fail modes; store logs for reproducibility.

6. Integrate with CI/CD for smoke tests on commits and full runs on release candidates.

7. Dashboard, regression alerts, and periodic re‑baselining.

Pseudocode: simple parallel harness (conceptual)

python

from concurrent.futures import ThreadPoolExecutor

def run_case(agent, case):

response = agent.invoke(case.input)

metrics = score(response, case.gold)

telemetry = collect_telemetry(response)

return {\”case_id\”: case.id, \”metrics\”: metrics, \”telemetry\”: telemetry}

with ThreadPoolExecutor(max_workers=20) as ex:

futures = [ex.submit(run_case, agent, case) for case in cases]

results = [f.result() for f in futures]

Aggregate results and compute pass rates, token usage, latency distributions

Best practices for datasets & scoring

- Mix production traces, curated edge cases, and synthetic adversarial examples; seed generators for reproducibility.

- Label gold outputs and define fuzzy match thresholds for open responses (embedding similarity or rubric scoring).

- Use deterministic oracles where possible; deploy human adjudication for ambiguous cases.

- Periodically re‑baseline datasets—remove deprecated cases once base models match capability‑uplift skills.

Integrating skill testing frameworks and autonomous agent QA

Wrap skills as testable endpoints and register metadata in the harness. Use vendor‑provided benchmark modes (e.g., Anthropic’s skill evals) to run parallel multi‑agent experiments and speed validation cycles [1][2]. Automate flaky‑skill notifications and provide diffs to skill authors to accelerate fixes.

Operational considerations include cost control (sample vs full runs), stability (seed randomness, capture env metadata), and security (sanitize PII). Instrument token caps and prioritized test subsets to control spend.

Forecast

Near‑term (6–12 months)

Expect wider adoption of vendor‑supplied eval toolkits with benchmark modes and parallel runners, plus more community repositories with sample scripts (benchmark.py). Teams will ship CI‑ready test suites for skill authors that automatically gate model and skill updates. Tooling will increasingly surface token/cost metrics as first‑class outcomes.

Mid‑term (12–24 months)

We’ll see standardized agent benchmarking formats and public leaderboards for common agent tasks (akin to GLUE/SuperGLUE but for agents). Cost‑aware benchmarks and token‑usage optimization will be built into evaluation suites and may become a competitive differentiator for model providers.

Team action plan & timeline (quarterly roadmap)

- Q1: Define KPIs, curate initial dataset, and implement a minimal harness.

- Q2: Add telemetry, parallel execution, and CI integration for PR smoke/regression runs.

- Q3: Expand datasets, add human‑in‑the‑loop review, and roll out dashboards/alerting.

- Q4: Automate re‑baselining, implement cost‑optimization routines, and publish internal benchmark reports.

Future implication: as tooling matures, autonomous agent QA will move from experimental to operational practice, enabling predictable release cadences and clear cost/benefit tradeoffs when choosing between model upgrades or skill maintenance.

CTA

Practical next steps (checklist)

- [ ] Define the top 3 agent tasks and success metrics for AI agent benchmarking.

- [ ] Version‑control an initial benchmark dataset with gold answers.

- [ ] Implement a parallel test harness and schedule nightly or PR runs.

- [ ] Start collecting token and latency telemetry; add cost tracking.

- [ ] Integrate a simple dashboard and set regression alert thresholds.

Resources & how I can help

- Reference implementations and examples: Anthropic’s skill‑creator blog and repository show how runable evals and benchmark mode are structured—use these as a starting point for integrating parallel multi‑agent evals into your CI pipeline [1][2].

- If you want, I can draft a starter repo scaffold, a GitHub Actions CI template for nightly benchmarks, or a sample scoring rubric tailored to your agent tasks—tell me which and I’ll produce artifacts you can drop into a repo.

Invitation

Leave a comment describing the main challenge you face with AI agent benchmarking (dataset drift, flaky skills, cost control, CI integration, etc.) and I’ll suggest a concrete change you can implement within a week.

References

- Anthropic — Improving Skill Creator: Test, Measure, and Refine Agent Skills: https://claude.com/blog/improving-skill-creator-test-measure-and-refine-agent-skills [1]

- Anthropic skills repository (examples and benchmark.py): https://github.com/anthropics/skills [2]