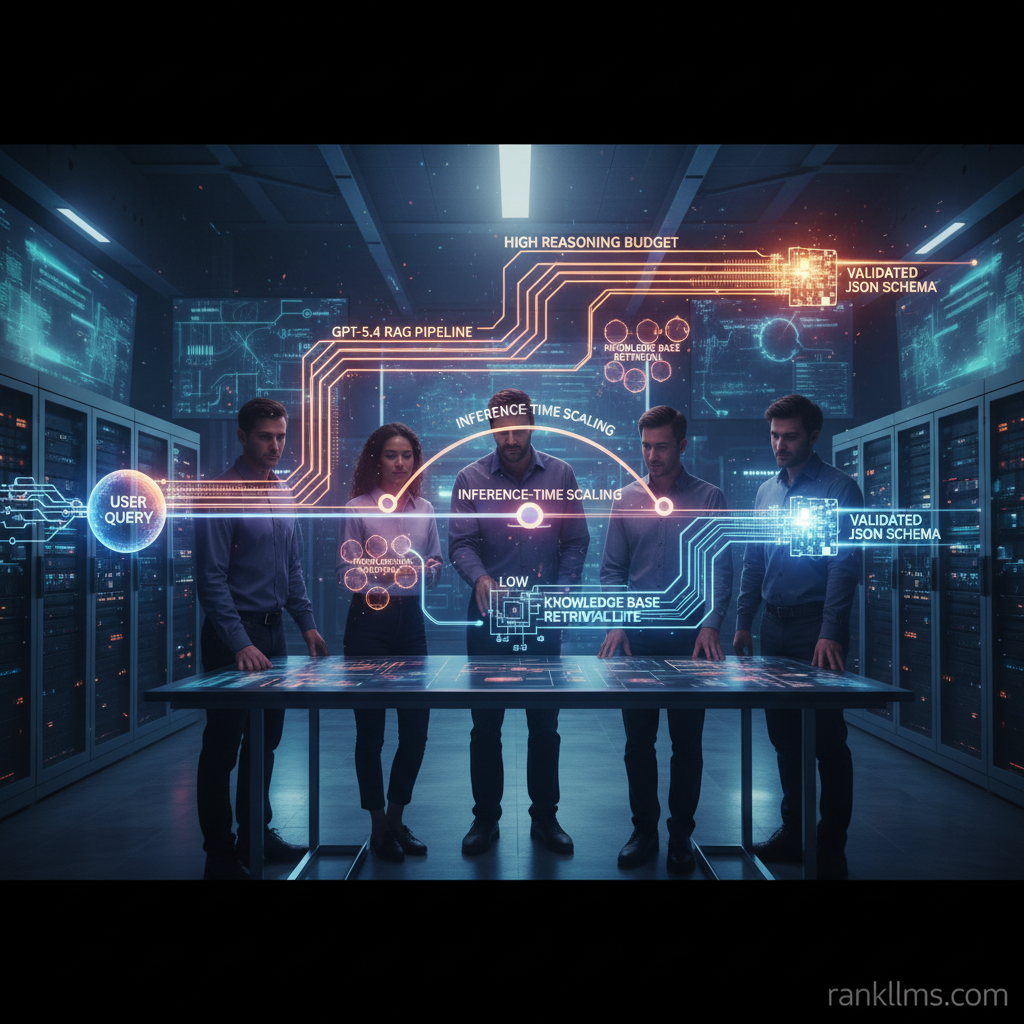

Inference-time scaling is a technique that dynamically varies the amount of computation or reasoning effort a model uses at inference time to match task difficulty and latency/compute constraints. Used correctly, it improves both perceived accuracy and AI compute efficiency (e.g., GPT-5.4 reasoning effort tuning).

Quick answer (featured‑snippet friendly): Inference-time scaling adjusts how much reasoning budget a model spends on each call—varying chain-of-thought depth, early-exit thresholds, ensemble usage, or retrieval iterations—based on runtime signals about input difficulty or confidence. When tuned (for example, with GPT-5.4 reasoning effort), it reduces wasted compute on easy queries while preserving high accuracy for hard ones.

Key takeaways

- What it is: Dynamically changing inference cost based on input complexity.

- Why it matters: Balances latency, cost, and accuracy for production AI.

- Where it’s used: Retrieval-augmented generation (RAG), multi-step chains, and adaptive polling (notably explored with GPT-5.4 and through OpenAI o-series evolution).

- Quick benefit: an expected 20–50% reduction in wasted compute for systems that successfully implement adaptive scaling within the first year.

One-line hook: How can product teams and engineers use inference-time scaling to get more from GPT-5.4 without paying for full reasoning on every call?

Background

What is inference-time scaling (short definition)

Inference-time scaling adjusts the reasoning effort (number of steps, chain-of-thought depth, ensemble size, or conditional compute) used by a model at runtime based on signals about input difficulty or confidence. Practically this means not treating every API call as equal: some calls receive a quick, low-cost pass; harder calls trigger progressively heavier compute until a confidence or cost threshold is met.

Analogy: think of a searchlight with variable beam intensity—don’t burn a high-power beam to read a poster at arm’s length; ramp up only when the text is far away or obscured.

Citations: foundational ideas around adaptive computation date back to Adaptive Computation Time (Graves, 2016) and early-exit networks like BranchyNet; see https://arxiv.org/abs/1603.08983 and https://arxiv.org/abs/1709.01698.

How it differs from training-time scaling

- Training-time scaling increases model capacity or dataset size to improve base performance (bigger models, more compute up front).

- Inference-time scaling keeps model capacity fixed but varies runtime compute per example. This is a software/infra pattern rather than a model-size trade.

- Trade-offs: inference-time scaling buys flexibility and operational cost savings without requiring retraining; training-time scaling improves worst-case accuracy but at high capital expense.

Key mechanisms used in practice

- Early exit / adaptive-depth networks: attach lightweight classifiers (exit heads) to shallow layers and stop computing as soon as one of them is confident its output is good enough.

- Confidence-based reranking and re-querying: use logit gaps, calibrated probabilities, or verifier models to decide escalation.

- Variable chain-of-thought length and selective grounding: generate short thoughts for well-grounded prompts; expand when evidence is low.

- Budget-aware ensembling and retrieval iteration: start with a low-cost candidate and only run expensive ensemble or extra retrieval when needed.
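To make the first two mechanisms concrete, here is a minimal sketch. It assumes you can see raw logits, which many hosted APIs do not expose, so treat it as a model-internals illustration rather than client code. It computes two confidence signals: a temperature-scaled softmax probability, as used in temperature-scaling calibration, and the top-1 minus top-2 logit gap. It then walks a stack of per-layer exit heads in the style of BranchyNet and stops at the first confident one. The function names and the default `threshold` are hypothetical.

```python
import math
from typing import Sequence


def softmax(logits: Sequence[float], temperature: float = 1.0) -> list[float]:
    """Temperature-scaled softmax; temperature > 1 softens over-confident logits."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def confidence_signals(logits: Sequence[float], temperature: float = 1.0) -> tuple[float, float]:
    """Return (max calibrated probability, raw logit gap between top-1 and top-2).

    Requires at least two logits.
    """
    probs = softmax(logits, temperature)
    top1, top2 = sorted(logits, reverse=True)[:2]
    return max(probs), top1 - top2


def early_exit(exit_logits: Sequence[Sequence[float]], threshold: float = 0.9,
               temperature: float = 1.0) -> tuple[int, int]:
    """Walk exit heads from shallowest to deepest and stop at the first confident one.

    Returns (index of the exit head used, predicted class). If no head clears
    the threshold, the deepest head's prediction is returned.
    """
    for depth, logits in enumerate(exit_logits):
        max_prob, _gap = confidence_signals(logits, temperature)
        if max_prob >= threshold or depth == len(exit_logits) - 1:
            predicted = max(range(len(logits)), key=lambda i: logits[i])
            return depth, predicted
    raise ValueError("exit_logits must contain at least one head")


heads = [[1.0, 0.8, 0.5], [3.0, 0.2, 0.1], [5.0, 0.0, 0.0]]
print(early_exit(heads, threshold=0.85))  # → (1, 0): the second head is already confident
```

The deepest head acts as a fallback, so every input gets an answer even when no shallow head is confident.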

Why this matters for GPT-5.4 and beyond

GPT-5.4 reasoning effort advances—improving calibration and exposing modular reasoning hooks—make inference-time scaling much more practical. As OpenAI evolves o-series families toward richer runtime signals, product teams can rely on model-provided confidence, progressive decoding APIs, and controllable reasoning budgets to implement adaptive pipelines more robustly.

For a hands-on example of integrating these ideas with a leading RAG toolchain, see Windsurf’s writeup on GPT-5.4 and RAG workflows: https://windsurf.com/blog/gpt-5.4.

Trend

Current industry signals

Engineering posts, SDK updates, and platform integrations show a clear shift from “single-cost” API calls toward adaptive-cost strategies. Public integrations—such as Windsurf’s coverage of GPT-5.4—demonstrate practical RAG + adaptive reasoning patterns and recommended defaults for escalation and provenance handling (Windsurf integration: https://windsurf.com/blog/gpt-5.4). Meanwhile, community toolkits like LangChain are codifying multi-pass retrieval and verifier layers that pair naturally with inference-time scaling (see LangChain docs).

Large providers are also exposing more runtime hooks: confidence metrics, partial decoding streams, and per-call compute flags—signals consistent with the broader OpenAI o-series evolution toward operational flexibility.

Drivers accelerating adoption

- Pressure to improve AI compute efficiency: inference costs often dominate production budgets.

- User expectations for both low latency and high-quality RAG outputs.

- Need for explainability and provenance in personal knowledge bases (PKBs) and assistants, which often require selective deeper reasoning.

- Improved model internals (better calibration and modular primitives) that make safe escalation possible.

Typical adoption patterns

1. Pilot: start with confidence-based retries for low-stakes conversational or classification endpoints.

2. Staging: add multi-pass retrieval + adaptive reasoning for PKBs and document Q&A.

3. Production: implement variable-depth reasoning, cost-aware ensembling, and monitoring for SLA-sensitive flows.

These patterns mirror how engineering orgs adopt feature flags and progressive rollouts: begin simple, measure, then add complexity where it matters.

Insight

Practical recipes: How to implement inference-time scaling with GPT-5.4

Below are three practical recipes that map well to GPT-5.4 reasoning effort capabilities.

- Recipe 1 — Confidence-first flow (minimal change)

1. Call the model with a constrained reasoning budget (short chain-of-thought, limited tokens).

2. Read the model's confidence signals (logit gap, scalar confidence) or ask a simple self-check question.

3. If confidence < threshold, escalate: re-run with a deeper chain-of-thought or larger context window.

- Benefits: quick wins with minimal infrastructure changes.
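Recipe 1 fits in a few lines of Python. In this minimal sketch, `call_model` is a hypothetical placeholder for whatever client you use. The effort labels `"low"` and `"high"` are assumptions modeled on the idea of a per-call reasoning-effort setting, not a documented GPT-5.4 parameter. How `confidence` is produced (logit gap, scalar score, or a self-check prompt) is left to the caller, and `fake_model` is a stub so the flow runs on its own.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ModelResult:
    text: str
    confidence: float  # assumed scalar in [0, 1], e.g. from a self-check prompt


# Hypothetical client signature: (prompt, effort) -> ModelResult.
ModelCall = Callable[[str, str], ModelResult]


def confidence_first(prompt: str, call_model: ModelCall, threshold: float = 0.8,
                     cheap_effort: str = "low",
                     deep_effort: str = "high") -> tuple[ModelResult, bool]:
    """Two-pass flow: cheap pass first, escalate only when confidence is low.

    Returns the final result and whether the call was escalated.
    """
    first = call_model(prompt, cheap_effort)  # step 1: constrained reasoning budget
    if first.confidence >= threshold:         # step 2: read the confidence signal
        return first, False
    second = call_model(prompt, deep_effort)  # step 3: re-run with deeper reasoning
    return second, True


def fake_model(prompt: str, effort: str) -> ModelResult:
    """Stub: long prompts count as hard, and only the deep pass is confident on them."""
    hard = len(prompt.split()) > 8
    conf = 0.95 if (not hard or effort == "high") else 0.4
    return ModelResult(text=f"[{effort}] answer", confidence=conf)


result, escalated = confidence_first("What is 2 + 2?", fake_model)
print(result.text, escalated)  # → [low] answer False
```

Returning the `escalated` flag alongside the result makes it easy to log the escalation rate, one of the success metrics listed later.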

- Recipe 2 — RAG + adaptive reasoning for PKBs

1. Retrieve top-k documents via embeddings.

2. Evaluate retrieval quality (semantic similarity, provenance sparsity).

3. If evidence is high-quality, use a short chain-of-thought and synthesize a concise answer with citations.

4. If evidence is sparse/conflicting, run a deeper reasoning pass, ask disambiguating queries, expand retrieval scope, and then synthesize.

- This pattern is especially useful for knowledge workers—fewer escalations reduce cost while preserving trust.
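Steps 2 to 4 of Recipe 2 hinge on an evidence-quality gate. The sketch below is a minimal version under stated assumptions. "High-quality" is taken to mean that at least `min_sources` distinct sources each contribute a chunk whose cosine similarity to the query is at or above `min_sim`, and it covers only sparsity; detecting *conflicting* evidence would need an extra check. The `Retrieved` type, the function names, and both thresholds are hypothetical, and useful similarity cut-offs vary by embedding model.

```python
import math
from dataclasses import dataclass


@dataclass
class Retrieved:
    source_id: str          # provenance: which document the chunk came from
    embedding: list[float]  # chunk embedding from your embedding model


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def choose_reasoning_path(query_emb: list[float], docs: list[Retrieved],
                          min_sim: float = 0.75, min_sources: int = 2) -> str:
    """Return "short" when evidence looks strong and "deep" when it is sparse."""
    # Count distinct sources, not chunks: five chunks from one document is still one source.
    strong_sources = {d.source_id for d in docs if cosine(query_emb, d.embedding) >= min_sim}
    return "short" if len(strong_sources) >= min_sources else "deep"


q = [1.0, 0.0]
docs = [Retrieved("a", [0.9, 0.1]), Retrieved("b", [0.8, 0.3]), Retrieved("c", [0.0, 1.0])]
print(choose_reasoning_path(q, docs))  # → short: two distinct sources clear the bar
```

Counting distinct sources rather than chunks is what lets the gate reflect provenance sparsity and not just retrieval volume.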

- Recipe 3 — Cost-aware ensembling

- Run a fast lightweight verifier (small model or heuristic) first; only run the expensive ensemble (multiple model calls, full chain-of-thought) when the verifier signals uncertainty.

- Example: use a small local model to validate high-frequency queries, escalate rare or ambiguous ones to GPT-5.4’s higher reasoning effort.
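Recipe 3 can be sketched as a gate in front of an ensemble. In this minimal version, `fast`, `verifier`, and `expensive` are hypothetical callables you would back with a small local model, a heuristic, and a GPT-5.4 call. The ensemble aggregates by majority vote over several expensive samples, a simple self-consistency-style choice; that is an assumption rather than the only option. Exact-string voting only works for short, normalizable answers.

```python
from collections import Counter
from typing import Callable

Verifier = Callable[[str, str], float]  # (query, draft answer) -> confidence in [0, 1]
Generator = Callable[[str], str]        # query -> answer


def cost_aware_answer(query: str, fast: Generator, verifier: Verifier,
                      expensive: Generator, n_samples: int = 5,
                      threshold: float = 0.7) -> tuple[str, int]:
    """Return (answer, number of expensive calls made)."""
    draft = fast(query)
    if verifier(query, draft) >= threshold:
        return draft, 0  # verifier is satisfied, so the ensemble is skipped entirely
    # Verifier signals uncertainty: sample the expensive model several times
    # and keep the most common answer (ties go to the first answer seen).
    samples = [expensive(query) for _ in range(n_samples)]
    answer, _count = Counter(samples).most_common(1)[0]
    return answer, n_samples


answer, calls = cost_aware_answer(
    "capital of France?",
    fast=lambda q: "Paris",
    verifier=lambda q, a: 0.9,
    expensive=lambda q: "Paris",
)
print(answer, calls)  # → Paris 0
```

Returning the number of expensive calls alongside the answer makes per-path cost accounting straightforward.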

Signals to trigger scaling (feature list)

- Model-provided confidence scores or logit gaps

- Retrieval score thresholds and provenance sparsity

- Query complexity heuristics (length, ambiguity markers, temporal anchors)

- Business rules (SLA/latency targets, user tier, cost budgets)
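These signals can be combined into a single escalation decision. In this minimal sketch, business rules act as hard gates and the quality signals vote. The marker word lists, the 0.7 and 0.6 cut-offs, the default deep-pass latency and cost, and all names are illustrative assumptions to tune on your own traffic.

```python
import re
from dataclasses import dataclass

# Illustrative marker lists; tune them on your own traffic.
AMBIGUITY_MARKERS = {"it", "this", "that", "they", "those", "either"}
TEMPORAL_ANCHORS = {"today", "yesterday", "latest", "current", "recent", "now"}
YEAR_PATTERN = re.compile(r"\b(?:19|20)\d{2}\b")


@dataclass
class CallContext:
    model_confidence: float     # e.g. calibrated confidence from a cheap first pass
    top_retrieval_score: float  # best similarity among retrieved chunks
    latency_budget_ms: int      # remaining SLA headroom for this request
    cost_budget_usd: float      # remaining spend allowed for this session
    premium_user: bool = False  # user tier


def complexity_score(query: str) -> int:
    """Crude query-complexity heuristic: one point per triggered signal (0 to 3)."""
    words = re.findall(r"[a-z0-9']+", query.lower())
    score = int(len(words) > 30)                               # long query
    score += int(any(w in AMBIGUITY_MARKERS for w in words))   # unresolved references
    score += int(any(w in TEMPORAL_ANCHORS for w in words)     # time-sensitive wording
                 or bool(YEAR_PATTERN.search(query)))
    return score


def should_escalate(query: str, ctx: CallContext,
                    deep_latency_ms: int = 4000, deep_cost_usd: float = 0.05) -> bool:
    # Business rules are hard gates: never escalate past the SLA or the budget.
    if ctx.latency_budget_ms < deep_latency_ms or ctx.cost_budget_usd < deep_cost_usd:
        return False
    # Each weak signal casts one vote for escalation.
    votes = (int(ctx.model_confidence < 0.7)
             + int(ctx.top_retrieval_score < 0.6)
             + int(complexity_score(query) >= 2))
    # Premium users escalate on a single vote; other tiers need two.
    return votes >= (1 if ctx.premium_user else 2)
```

Checking the business rules first means a request that cannot afford the deep path never pays for signal computation it cannot act on.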

Measuring success (metrics optimized for AI compute efficiency)

- Accuracy or helpfulness per unit of compute or per dollar (compute-normalized utility)

- P95 and P99 latency under variable loads

- Fraction of calls escalated and average cost per session

- User satisfaction and error rate on high-stakes queries

- Monitor for over-escalation to avoid runaway cost
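Most of these metrics fall out of a plain call log. This minimal sketch assumes a hypothetical `CallRecord` schema with one record per answered call. It computes escalation rate, average cost per session, correct answers per dollar, nearest-rank P95/P99 latency, and cost broken down by path, which also supports the per-path cost accounting recommended below.

```python
import math
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class CallRecord:
    session_id: str
    path: str          # which path produced the answer, e.g. "fast" or "deep"
    escalated: bool
    latency_ms: float
    cost_usd: float
    correct: bool      # from labels, graders, or user feedback


def percentile(values: list[float], q: float) -> float:
    """Nearest-rank percentile for q in (0, 100]."""
    ordered = sorted(values)
    rank = math.ceil(q / 100 * len(ordered))
    return ordered[max(rank, 1) - 1]


def summarize(log: list[CallRecord]) -> dict:
    """Compute-efficiency metrics over a non-empty call log."""
    total_cost = sum(r.cost_usd for r in log)
    sessions = {r.session_id for r in log}
    cost_by_path: dict[str, float] = defaultdict(float)
    for r in log:
        cost_by_path[r.path] += r.cost_usd
    latencies = [r.latency_ms for r in log]
    return {
        "escalation_rate": sum(r.escalated for r in log) / len(log),
        "avg_cost_per_session_usd": total_cost / len(sessions),
        "correct_per_dollar": sum(r.correct for r in log) / total_cost if total_cost else float("inf"),
        "p95_latency_ms": percentile(latencies, 95),
        "p99_latency_ms": percentile(latencies, 99),
        "cost_by_path_usd": dict(cost_by_path),
    }
```

With small logs, P95 and P99 collapse onto the slowest call, so tail-latency numbers are only meaningful once enough traffic has accumulated.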

Pitfalls and how to avoid them

- Over-escalation: tune thresholds conservatively; use A/B experiments to find sweet spots.

- Hidden latency spikes: parallelize lightweight verification and pre-warm heavier paths for critical SLAs.

- Explainability loss: always attach provenance and a short reasoning summary when escalating; preserve audit logs.

- Calibration drift: recalibrate confidence scores and re-tune thresholds periodically as data or model versions change.

Practical note: implement feature flags and real-time cost accounting so teams can see which paths drive spend and accuracy—then iterate.
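One way to tune thresholds conservatively, and to re-run the tuning when calibration drifts, is to fit them on a fresh labeled sample. This minimal sketch picks the lowest confidence threshold at which the calls answered by the cheap pass still meet a target accuracy, which minimizes escalations subject to that accuracy floor. The function name, the 0.95 default, and the fall-back of escalating everything are assumptions, not a prescribed procedure.

```python
def tune_threshold(confidences: list[float], correct: list[bool],
                   target_accuracy: float = 0.95) -> tuple[float, float]:
    """Pick the lowest threshold whose non-escalated calls meet the target accuracy.

    Calls with confidence >= threshold are answered by the cheap pass; the rest
    escalate. Returns (threshold, escalation_rate). If no threshold meets the
    target, every call escalates (threshold = inf).
    """
    n = len(confidences)
    for t in sorted(set(confidences)):
        accepted = [ok for c, ok in zip(confidences, correct) if c >= t]
        if accepted and sum(accepted) / len(accepted) >= target_accuracy:
            return t, 1 - len(accepted) / n
    return float("inf"), 1.0


# Hypothetical validation sample: cheap-pass confidence and whether it was right.
t, escalation_rate = tune_threshold([0.2, 0.4, 0.6, 0.8, 0.9],
                                    [False, False, True, True, True])
print(t, round(escalation_rate, 2))  # → 0.6 0.4
```

Raising `target_accuracy` trades more escalations (higher cost) for fewer wrong cheap-pass answers, which is the knob the A/B experiments above would explore.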

Forecast

Short-term (6–12 months)

- APIs and SDKs will expose per-call reasoning budgets and confidence hooks more broadly; early SDKs already reflect this trend.

- Early adopter integrations (PKBs, agents) will prove cost savings and better UX; Windsurf’s GPT-5.4 integration is a concrete early example (https://windsurf.com/blog/gpt-5.4).

- Expect many teams to adopt simple two-pass confidence-first flows as a low-friction starting point.

Medium-term (1–2 years)

- Standardized patterns (confidence-first, RAG-adaptive, budgeted ensembles) will be packaged into ML infra stacks and templates.

- Tooling: dashboards for per-path compute, escalation analytics, and default escalation templates.

- Organizations will report measurable reductions in cost per query as more systems adopt inference-time scaling.

Long-term (3+ years)

- Models and hardware will increasingly co-design for adaptive compute: chips and accelerators that support early exits or fine-grained conditional execution.

- OpenAI o-series evolution (and competitors) will likely include native adaptive-depth models and richer runtime controls, making inference-time scaling a first-class capability.

- New product tiers may sell dynamic reasoning guarantees instead of fixed token quotas.

Concrete numeric forecast (snippet-ready bullets)

- Expect a 20–50% reduction in average inference cost for systems that correctly implement adaptive scaling within the first year.

- Escalation rates for well-tuned systems should stabilize below 10% for routine queries and at 30–40% for mixed-complexity workloads.
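A back-of-the-envelope cost model shows how escalation rate drives savings. Assume a two-pass flow in which every call pays the cheap-pass cost $c_1$ and a fraction $p$ of calls also pays the deep-pass cost $c_2$. The deep pass does not reuse cheap-pass work, verifier overhead is ignored, and the baseline runs the deep pass on every call. The symbols and the example numbers are illustrative, not measurements.

```math
\mathbb{E}[C_{\text{adaptive}}] = c_1 + p\,c_2, \qquad C_{\text{baseline}} = c_2,
\qquad
\text{savings} = 1 - \frac{c_1 + p\,c_2}{c_2} = 1 - \frac{c_1}{c_2} - p .
```

For example, with $c_1/c_2 = 0.25$ and $p = 0.3$, savings are $1 - 0.25 - 0.3 = 0.45$, or 45%. The flow only pays off while $p < 1 - c_1/c_2$, which is why over-escalation is the main cost risk.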

CTA

What to do next (actionable steps for early adopters)

- Experiment: implement a confidence-first two-pass flow on a subset of endpoints (start with low-stakes queries).

- Measure: track cost-per-answer, escalation rate, P95 latency, and user satisfaction.

- Integrate: try the Windsurf integration with GPT-5.4 for a practical RAG + adaptive reasoning example and recommended defaults (Windsurf integration: https://windsurf.com/blog/gpt-5.4).

Resources & further reading

- Graves, A. (2016). Adaptive Computation Time for Recurrent Neural Networks. Foundational adaptive-compute concept: https://arxiv.org/abs/1603.08983.

- BranchyNet and the early-exit literature on fast inference strategies: https://arxiv.org/abs/1709.01698.

- LangChain documentation for practical RAG and verifier patterns: https://langchain.readthedocs.io/.

- Windsurf’s GPT-5.4 engineering blog for hands-on RAG examples: https://windsurf.com/blog/gpt-5.4.

Lead magnet / product CTA

Download: “Inference-time scaling checklist for GPT-5.4” — a compact repo + checklist containing:

- Recommended thresholds and telemetry schema

- Example two-pass flow code snippets

- Escalation templates and monitoring dashboard queries

Sign up for a demo or freemium trial to test with your PKB or assistant workflows.

Closing one-liner for meta/SEO

Inference-time scaling is the practical lever product teams can use today to balance accuracy and AI compute efficiency while preparing for the next generation of models (OpenAI o-series evolution) and integrations like Windsurf.

Related reading: for a deeper dive into PKB and RAG architectures that pair well with inference-time scaling, see the Windsurf article and LangChain resources linked above.