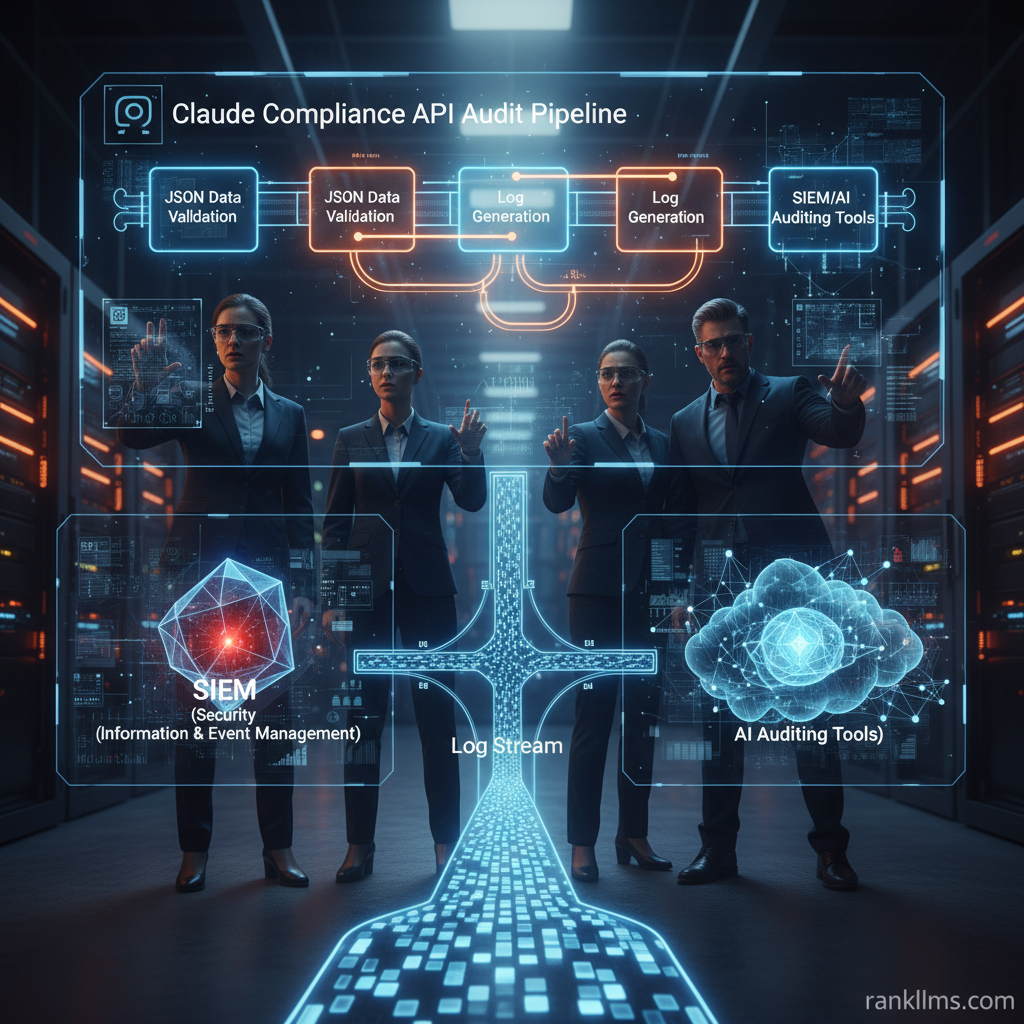

Enterprises building governance around LLM usage need practical, technical guidance to convert streaming model telemetry into audit-ready evidence. This post walks through a focused audit playbook that uses the Claude Compliance API to centralize platform-activity logging, normalize events, and feed AI auditing tools and SIEMs in support of regulatory AI compliance and Anthropic enterprise security. The Quick Audit Summary below gives an at-a-glance checklist you can implement in days; the rest of the article expands each step into an operational framework, integration patterns, common pitfalls, and a 90‑day rollout plan.

Quick audit summary

1. Enable Claude Compliance API logging and set retention policies.

2. Collect platform activity (calls, responses, metadata) into your SIEM or secure storage.

3. Normalize audit records and map fields to regulatory requirements (e.g., GDPR, HIPAA, SOC 2).

4. Run automated checks and anomaly detection using AI auditing tools.

5. Produce evidence-backed reports and retain them according to enterprise policy.

Why this matters

- Regulatory traceability: The Claude Compliance API provides structured activity events that map directly to audit controls, making it easier to show who accessed model outputs and what data was involved.

- Security posture: When combined with RBAC, encryption, and endpoint logging, Claude audit streams become part of Anthropic enterprise security telemetry, enabling faster incident investigation.

- Operational efficiency: Instead of ad-hoc log pulls, standardized events accelerate compliance reporting and reduce manual forensic work.

Who should use this guide

- Security engineers and compliance officers implementing regulatory AI compliance.

- DevOps and SRE teams integrating audit pipelines into CI/CD and observability stacks.

- Privacy teams tracking data access and model behavior for data subject requests or breach investigations.

What you’ll get from this post

A practical, step-by-step audit outline that integrates Claude Compliance API with common AI auditing tools (for example, anomaly detectors and prompt-scanning ML models), SIEMs, and GRC systems. The guidance is platform-agnostic but references Anthropic’s native compliance output format and recommended mappings so you can scale from PoC to enterprise-grade logging quickly. For an official reference on Claude’s audit capabilities, see the product blog (https://claude.com/blog/claude-platform-compliance-api).

Background

What is the Claude Compliance API?

The Claude Compliance API is a platform-facing telemetry endpoint that emits structured events for model interactions: requests, responses (or response hashes), policy flagging, authentication metadata, and service-level events. Its primary purpose is to centralize platform-activity logging in a format suitable for enterprise governance: not raw request dumps, but normalized records intended for ingestion into SIEMs, log lakes, or secure object stores. Think of it as an application-specific audit logger that outputs the building blocks auditors expect (who, what, when, and where), plus contextual signals for why a model behaved a certain way.

Where it fits in Anthropic enterprise security

- Authentication & RBAC: The Compliance API complements identity controls by tying model usage records to authenticated principals and service accounts.

- Encryption & DLP: Audit streams operate alongside encryption-in-transit and at-rest; DLP systems should ingest events to correlate flagged content (e.g., potential exfiltration) with user and session metadata.

- Endpoint & Network Monitoring: Claude audit logs augment network telemetry by revealing model-level events that network logs cannot see (for example, a prompt that contains sensitive tokens). Together they give a fuller picture for detection and response.

For implementation guidance and example schemas, see Claude’s platform compliance announcement (https://claude.com/blog/claude-platform-compliance-api).

Regulatory context: mapping to compliance frameworks

- GDPR: Capture user_id, timestamp, and request identifiers to demonstrate lawful processing and support data subject access or deletion requests. Log redaction actions and retention updates as evidence of compliance.

- HIPAA: Record access trails for PHI-related prompts and responses, including who retrieved outputs and whether redaction or masking occurred. Ensure retention and access controls meet HIPAA audit requirements.

- SOC 2: Use Claude events as system activity evidence for availability, security, and change management controls; immutable timestamps and signed reports strengthen control assertions.

Map each regulatory requirement (access logging, retention, deletion, integrity) to specific event fields (user_id, request_id, response_hash, policy_flags, retention_state). This mapping is the foundation of regulatory AI compliance across frameworks.
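As a rough illustration of that mapping, the sketch below encodes a small control matrix and reports which mapped fields an event is missing. The field names are the ones listed in this article; the requirement groupings and the `missing_fields` helper are hypothetical and should be replaced with the mappings your compliance team agrees on.

```python
from typing import Dict, List

# Hypothetical control matrix. The requirement-to-field groupings are
# illustrative only; they are not taken from any regulation or vendor schema.
CONTROL_MATRIX: Dict[str, Dict[str, List[str]]] = {
    "GDPR": {
        "access_logging": ["user_id", "request_id", "timestamp"],
        "deletion": ["request_id", "retention_state"],
    },
    "HIPAA": {
        "access_logging": ["user_id", "request_id", "timestamp"],
        "integrity": ["response_hash", "policy_flags"],
    },
    "SOC2": {
        "integrity": ["response_hash", "timestamp"],
        "retention": ["retention_state"],
    },
}


def missing_fields(event: dict, framework: str) -> Dict[str, List[str]]:
    """Return, per requirement, the mapped fields that are absent or empty."""
    gaps: Dict[str, List[str]] = {}
    for requirement, fields in CONTROL_MATRIX[framework].items():
        # Treat None and empty strings as missing; empty lists count as present.
        absent = [f for f in fields if event.get(f) in (None, "")]
        if absent:
            gaps[requirement] = absent
    return gaps
```

Running `missing_fields` over a sample of ingested events is a cheap way to spot which controls currently lack evidence before an auditor does.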

Trend

Why auditing AI platform activity is accelerating

Regulators and customers now expect traceability for automated decision-making. The EU AI Act and frameworks such as NIST’s AI Risk Management Framework emphasize model-level documentation and auditability. As a result, organizations face increasing demands for model explainability, data lineage, and demonstrable control evidence. Enterprises need tools that continuously monitor LLM behavior and produce immutable evidence on request, which is driving investment in audit pipelines and AI auditing tools that automate evidence collection.

Current state of AI auditing tools and Anthropic enterprise security

The market for AI auditing tools has matured from manual review scripts to platforms offering automated scanning, behavioral anomaly detection, and risk scoring. These tools:

- Parse prompt/response streams, redacting PII and hashing outputs for verifiable proofs.

- Score prompts and responses against policy templates (privacy, IP, safety).

- Integrate with SIEMs and GRC systems to correlate model events with wider security signals.

Claude’s Compliance API plugs into this ecosystem as a standardized telemetry source. Enterprises commonly forward Claude events to Elastic, Splunk, or cloud-native logging services and then layer AI auditing tools to flag suspicious patterns. For high‑assurance environments, streams are also written to immutable storage to support tamper-evident evidence chains.
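One common way to make a stored event stream tamper-evident is a hash chain, where each record's digest covers the previous record's digest. The sketch below shows the idea using only the Python standard library. It is a generic technique, not something the Compliance API is documented to provide, and the record layout (`event`, `prev_hash`, `hash`) is an assumption made for illustration.

```python
import hashlib
import json
from typing import Iterable, List

GENESIS = "0" * 64  # placeholder digest used before the first record


def _digest(prev_hash: str, event: dict) -> str:
    # Canonical JSON (sorted keys, no extra whitespace) keeps hashes stable.
    payload = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def chain_events(events: Iterable[dict]) -> List[dict]:
    """Wrap each event with the previous record's hash and its own hash."""
    prev = GENESIS
    chained = []
    for event in events:
        current = _digest(prev, event)
        chained.append({"event": event, "prev_hash": prev, "hash": current})
        prev = current
    return chained


def verify_chain(chained: List[dict]) -> bool:
    """Recompute every digest; any edited or reordered record breaks the chain."""
    prev = GENESIS
    for record in chained:
        expected = _digest(prev, record["event"])
        if record["prev_hash"] != prev or record["hash"] != expected:
            return False
        prev = expected
    return True
```

A chain like this only detects tampering; it still needs to live in write-once storage, or have its latest hash published somewhere independent, to be useful as evidence.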

Key metrics security teams are tracking

- Log completeness and latency: percentage of events delivered and time-to-ingest; gaps indicate blind spots.

- Event retention and tamper‑evidence: enforcement of retention policies and cryptographic controls for high‑risk logs.

- Rate of anomalous model outputs: alerts per million requests flagged by AI auditing tools for prompt injections, data exfiltration attempts, or policy violations.

- False positive/negative rates: precision and recall of the auditing models — essential for tuning alerting thresholds.

Monitoring these metrics helps teams prioritize fixes, tune detection models, and demonstrate control effectiveness to auditors.
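The metrics above reduce to a handful of ratios. The following sketch computes them from raw counts; the `AuditCounts` container and its field names are hypothetical, and the counts themselves would come from your SIEM and from labelled review of past alerts.

```python
from dataclasses import dataclass


@dataclass
class AuditCounts:
    """Hypothetical counters gathered over one reporting window."""
    events_sent: int       # events the source reports having emitted
    events_ingested: int   # events that actually arrived in the SIEM
    total_requests: int    # model requests in the window
    true_positives: int    # alerts confirmed as real issues
    false_positives: int   # alerts dismissed after review
    false_negatives: int   # issues found later that raised no alert


def completeness(c: AuditCounts) -> float:
    return c.events_ingested / c.events_sent if c.events_sent else 0.0


def alerts_per_million(c: AuditCounts) -> float:
    alerts = c.true_positives + c.false_positives
    return alerts * 1_000_000 / c.total_requests if c.total_requests else 0.0


def precision(c: AuditCounts) -> float:
    flagged = c.true_positives + c.false_positives
    return c.true_positives / flagged if flagged else 0.0


def recall(c: AuditCounts) -> float:
    actual = c.true_positives + c.false_negatives
    return c.true_positives / actual if actual else 0.0
```

Tracking these per week makes regressions visible: a drop in completeness points at the pipeline, while a drop in precision points at the detection rules.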

Insight

How to audit Claude platform activity: a step-by-step framework

1. Configure data sources

- Enable the Claude Compliance API at the organization level and assign an ingestion service account. Define scope: which models, endpoints, and teams must be captured. Use RBAC to prevent blind spots from service account proliferation.

2. Centralize and normalize logs

- Forward events into your SIEM, log lake, or a secure object store (immutable where required). Normalize to a baseline schema: user_id, request_id, timestamp, model_id, prompt_hash, response_hash, policy_flags, redaction_metadata, retention_state. Standardized fields accelerate queries and reporting.

3. Map events to compliance controls

- Build control matrices that map each event field to GDPR, HIPAA, and SOC 2 requirements. Flag records that touch personal or sensitive data and apply additional retention or access controls. For example, tag records as PHI when prompts contain healthcare identifiers and ensure extra audit trails for access.

4. Automate monitoring and alerting

- Use AI auditing tools to scan normalized events for anomalies: sudden spikes in token counts, repeated attempts to elicit confidential data, or prompt injection signatures. Implement both threshold-based alerts and behavioral models in your SIEM; feed outputs back into ticketing and incident response workflows.

5. Generate audit-ready reports

- Produce signed, time-stamped reports that include sampled events, response hashes, redaction details, and access logs. Store hashes of reports in immutable storage or a blockchain anchor if you need external tamper-proofing.

6. Validate and test controls

- Conduct regular red-team tests and synthetic transactions to validate coverage. Simulate privacy incidents and ensure your retention enforcement and immutable storage mechanisms meet policy.
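Step 2's normalization can be sketched as a small transform applied on ingestion. The baseline field list comes from this article; the upstream names in `FIELD_ALIASES` are invented for illustration and do not describe the actual Compliance API payload, so check the real event format before adapting this.

```python
from datetime import datetime, timezone
from typing import Any, Dict

BASELINE_FIELDS = (
    "user_id", "request_id", "timestamp", "model_id", "prompt_hash",
    "response_hash", "policy_flags", "redaction_metadata", "retention_state",
)

# Hypothetical upstream names mapped onto the baseline schema.
FIELD_ALIASES = {
    "actor": "user_id",
    "id": "request_id",
    "created_at": "timestamp",
    "model": "model_id",
}


def normalize_event(raw: Dict[str, Any]) -> Dict[str, Any]:
    """Rename known aliases, drop unknown keys, and emit UTC ISO 8601 timestamps."""
    renamed = {FIELD_ALIASES.get(key, key): value for key, value in raw.items()}
    event = {field: renamed.get(field) for field in BASELINE_FIELDS}

    ts = event["timestamp"]
    if isinstance(ts, (int, float)):
        # Numeric timestamps are assumed to be epoch seconds.
        event["timestamp"] = datetime.fromtimestamp(ts, tz=timezone.utc).isoformat()
    elif isinstance(ts, str):
        parsed = datetime.fromisoformat(ts.replace("Z", "+00:00"))
        if parsed.tzinfo is None:
            parsed = parsed.replace(tzinfo=timezone.utc)  # assume UTC if unlabeled
        event["timestamp"] = parsed.astimezone(timezone.utc).isoformat()

    # Always store flags as a list so downstream queries need no None checks.
    event["policy_flags"] = list(event["policy_flags"] or [])
    return event
```

Keeping the alias table in one place is the "mapping layer" idea: when an upstream field is renamed, only `FIELD_ALIASES` changes and downstream queries keep working.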

Analogy: Treat Claude audit events like bank transaction records — each must be complete, timestamped, and attributable to an identity; without that, reconciliation and fraud detection become impossible.
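For the threshold-based alerts in step 4, a trailing-window z-score is one simple starting point for catching sudden spikes in token counts. This is a minimal sketch, not a recommendation of specific thresholds: the window size, the z-score cutoff, and the assumption that a per-request token count is available in your events are all illustrative.

```python
from statistics import mean, pstdev
from typing import List, Sequence


def token_spikes(
    token_counts: Sequence[int],
    window: int = 5,
    z_threshold: float = 3.0,
) -> List[int]:
    """Return indices whose count sits far above the trailing-window mean."""
    flagged: List[int] = []
    for i in range(window, len(token_counts)):
        history = token_counts[i - window:i]
        mu = mean(history)
        # Floor the deviation so a perfectly flat history cannot divide by zero
        # or flag trivially small changes.
        sigma = max(pstdev(history), 1.0)
        if (token_counts[i] - mu) / sigma > z_threshold:
            flagged.append(i)
    return flagged
```

A rule like this is noisy on its own; in practice it works best as one signal that feeds the behavioral models and ticketing workflows described in step 4.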

Common pitfalls and mitigation

- Incomplete log coverage: Ensure service accounts, batch jobs, and private endpoints are included in the Compliance API scope. Run discovery scans and synthetic traffic to validate coverage.

- Poor field normalization: Adopt a strict schema and transform events on ingestion. Use a mapping layer to keep upstream changes from breaking downstream queries.

- Storing raw prompts with PII: Instead of saving full prompts, hash or redact sensitive tokens and maintain a secure mapping in a vault for forensic-only use.
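The last mitigation can be sketched as follows: redact obvious identifiers for the stored text, and keep a keyed hash of the original prompt so identical prompts can still be correlated. The two regular expressions are deliberately simplistic placeholders (real PII detection needs a dedicated DLP tool), and the record layout and function names are hypothetical.

```python
import hashlib
import hmac
import re

# Simplistic illustrative patterns; they will miss many real-world formats.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def redact(prompt: str) -> str:
    """Replace matched identifiers with fixed placeholders."""
    prompt = EMAIL_RE.sub("[EMAIL]", prompt)
    return SSN_RE.sub("[SSN]", prompt)


def prompt_hash(prompt: str, key: bytes) -> str:
    """Keyed SHA-256 (HMAC) of the original prompt, as a hex string."""
    return hmac.new(key, prompt.encode("utf-8"), hashlib.sha256).hexdigest()


def to_audit_record(prompt: str, key: bytes) -> dict:
    """Build the fields that are safe to store in the general-purpose log."""
    return {
        "prompt_redacted": redact(prompt),
        "prompt_hash": prompt_hash(prompt, key),
    }
```

An HMAC is used here instead of a bare hash because short or predictable prompts could otherwise be recovered by hashing guesses; the key belongs in the same vault that holds the forensic-only mapping.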

Integrations and tooling patterns

- Feed Claude logs into Elastic, Splunk, or cloud logging (CloudWatch/Log Analytics), then layer AI auditing tools for automated analysis and risk scoring.

- Use serverless processors or stream transformations (Kafka, Kinesis) to normalize fields and enrich events with identity context.

- Integrate results with GRC platforms (ServiceNow, Archer) to automate evidence collection for audits.

For implementation patterns and example schemas, consult product documentation and the Claude platform compliance announcement (https://claude.com/blog/claude-platform-compliance-api). For broader governance frameworks, see NIST’s AI Risk Management materials (https://www.nist.gov/itl/ai).

Forecast

Where regulatory AI compliance is heading

- More prescriptive regulation: Expect requirements that mandate model-level traceability, provenance metadata, and demonstrable safety tests as part of compliance regimes (building on initiatives like the EU AI Act and NIST guidance).

- Standardized audit schemas: Vendors and regulators will converge on common event schemas to simplify cross-vendor compliance and reduce integration overhead.

- Continuous compliance: Real-time monitoring, automated remediation, and SLA-driven compliance will replace mostly reactive audit cycles.

These shifts mean audit pipelines must be near real-time, schema-stable, and integrated into incident response; static, periodic reports won’t satisfy future auditors.

How enterprises should prepare

- Standardize audit schemas now: Define canonical fields and retention rules before scaling. Schema drift is costly to correct later.

- Invest in AI auditing tools: Choose tooling that can scale with request volume and provide explainable reasons for flags to reduce false positives during audits.

- Build a 30/60/90 day plan: Start with enabling the Claude Compliance API and forwarding logs (0–30), normalize and map to controls (30–60), then automate reporting and run compliance exercises (60–90).

Strategic benefits of early adoption

Early standardization and integration yield faster audit response times, lower ongoing compliance costs, a stronger vendor risk profile, and tighter alignment with Anthropic enterprise security objectives. Teams that build continuous compliance pipelines can convert regulatory reporting from an annual headache into an operational KPI.

Future implication: As regulations codify supplier auditability, organizations that can produce immutable, well-indexed model telemetry will have a competitive advantage during procurement and vendor risk assessments.

CTA

Immediate next steps (quickstart checklist)

- 0–30 days: Enable the Claude Compliance API on your organization account, identify stakeholders (security, privacy, DevOps), and forward events to a secure endpoint. Verify ingestion and run discovery tests.

- 30–60 days: Normalize events, map fields to GDPR/HIPAA/SOC 2 controls, and configure SIEM alerts using AI auditing tools. Tune thresholds to reduce noise and document workflows for escalations.

- 60–90 days: Automate report generation (signed, time-stamped), run a compliance exercise (red-team + audit), and harden retention/immutability policies.

Resources and where to learn more

- Claude Platform Compliance API announcement: https://claude.com/blog/claude-platform-compliance-api

- NIST AI Risk Management Framework & guidance: https://www.nist.gov/itl/ai

- Suggested searches: "Claude Compliance API examples", "AI auditing tools integration", "Anthropic enterprise security best practices"

Final prompt to action

Request a demo or contact your security/compliance lead to schedule a 90‑day integration plan for Claude Compliance API and enterprise-grade auditing. Early adopters will not only meet regulatory AI compliance expectations more quickly but will also strengthen their Anthropic enterprise security posture and reduce incident response times.