rankllms

How Tech Visionaries Are Using Skill Measurement to Accelerate AI Agent Evolution

AI agent evolution is the arc from bots to agents — a transformation from scripted, reactive chatbots to context-aware, goal-directed systems that compose and ...

How to Validate JSON Data

Claude Code optimization is the practice of tuning Claude-powered code, prompts, and agent skills so they produce accurate, efficient, and safe outputs reliably. In ...

Ensuring Data Integrity with Schemas

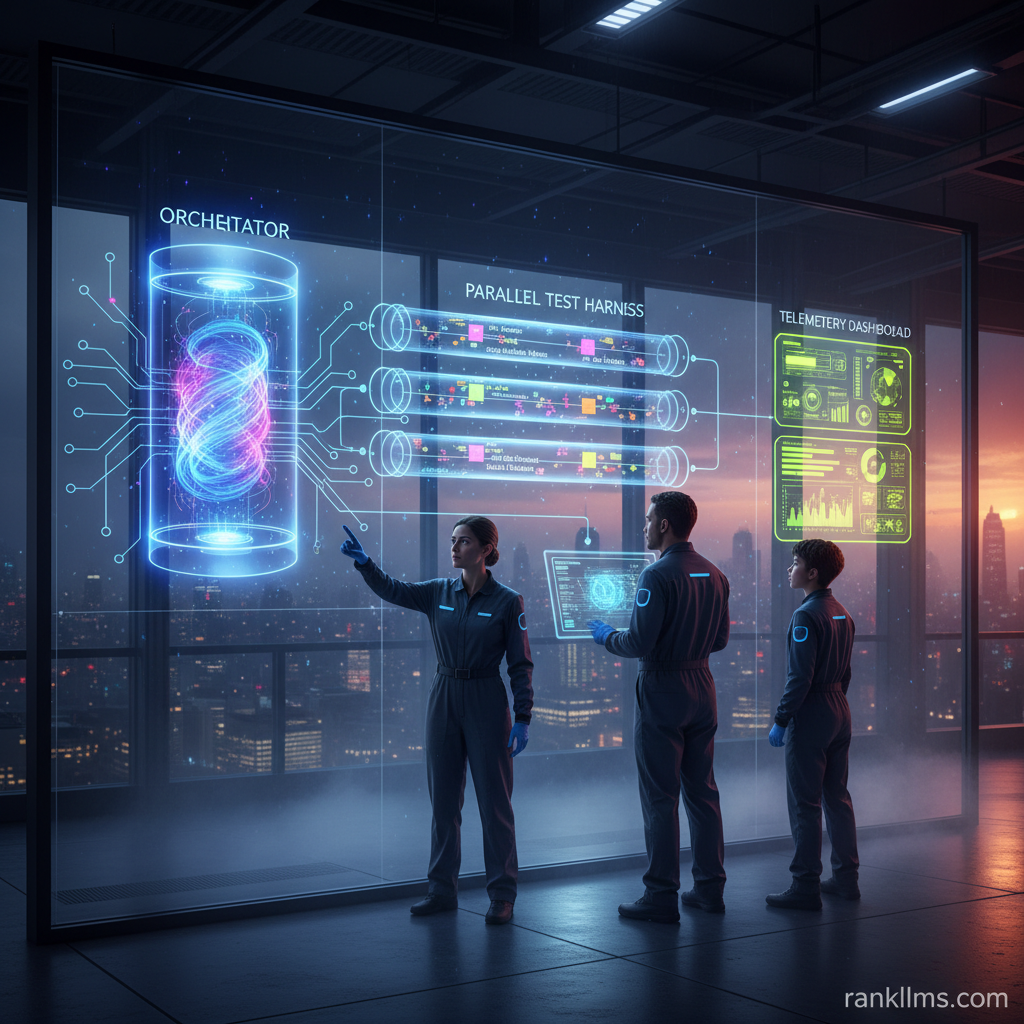

The modern AI development lifecycle is shifting from a “prompt and pray” approach to a repeatable “test and refine” methodology: teams build measurable, ...

The Hidden Truth About Skill Measurement Techniques for Reducing AI Agent Errors

AI agent error management is an operational discipline: it’s the repeatable set of practices you use to reduce incorrect outputs, unsafe actions, latency failures, ...

Key Trends Shaping AI Development

Scaling autonomous AI agents is no longer an experimental add-on — it’s a core engineering and product discipline for enterprises that expect reliability, safety, ...

Understanding JSON Schema

Agentic workflow optimization is now a practical engineering discipline: teams design, benchmark, and iterate autonomous agent skills and orchestration to improve accuracy, latency, and ...

Understanding JSON Schema Validation

To build a high-performance AI agent benchmarking suite, create a repeatable, automated evaluation pipeline that (1) defines ...

Ethical Considerations in Machine Learning

AI agent reliability is the baseline expectation for any production agent: predictable outputs, auditable decisions, and recoverable behavior when things go wrong. In this ...

Ensuring Schema Compliance

A practical guide to mastering Claude skill-creator for designing, testing, and measuring agent skills using Claude Code development techniques. ...